The Intel Inside for BCI

Real-time, Explainable Neural Inference

What is a BCI?

A Direct Pathway Between Brain and Machine

A Brain-Computer Interface (BCI) is a technology that captures brain signals, analyzes them, and translates them into commands that are relayed to an output device to carry out a desired action.

Essentially, BCI allows for the direct control of computers or other devices using only thought.

Non-Invasive BCI

(e.g., EEG Headset)

Invasive BCI

(e.g., Neural Implant)

Slide 3: The Problem

The BCI Revolution Faces a Bottleneck

- High Latency: A 100–300ms gap between thought and action breaks immersion and usability. At that delay, the brain perceives a disconnect — making low latency not a feature, but a hard requirement for any viable BCI product.

- Low Adaptability: Static models can't track the brain's changing states. In clinical settings, retraining costs $10K–$50K per session plus weeks of engineering time, which makes iteration prohibitively expensive.

- The "Black Box" Issue: Uninterpretable models block regulatory approval and erode user trust.

Together, these bottlenecks stall adoption across medicine, assistive technology, and immersive VR/AR.

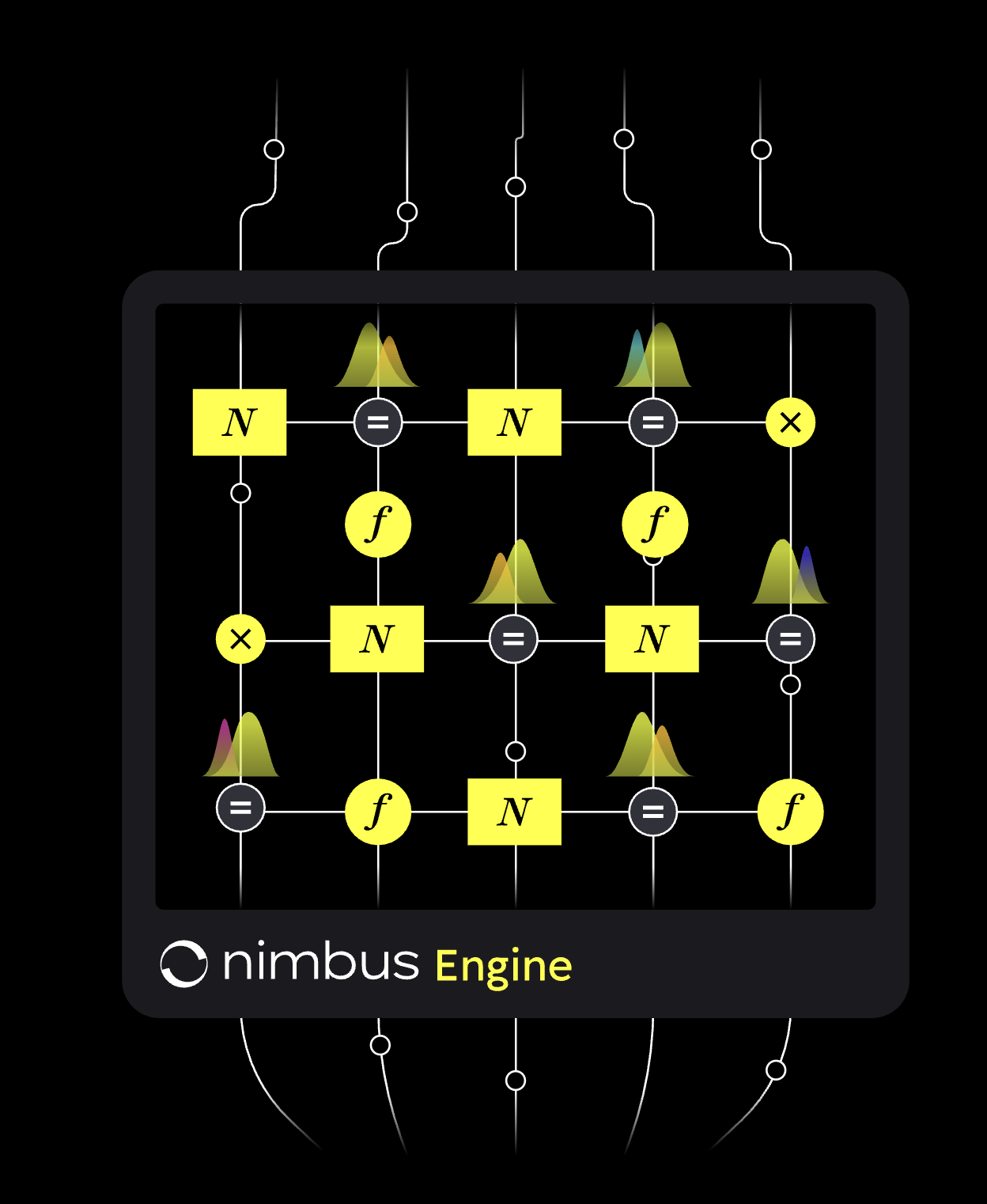

Slide 4: Our Technological Core

Introducing Nimbus SDK: The Reactive Inference Engine

Nimbus SDK is a production-ready inference engine built for real-time brain signal processing. Unlike static ML models, it runs probabilistic models that continuously update as new data arrives — adapting to the brain's changing states without retraining.

At its core, Nimbus SDK uses reactive message passing on factor graphs — a technique pioneered by our team through RxInfer, an open-source Bayesian inference library developed by Lazy Dynamics, our co-founding company.
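To make "continuously update as new data arrives" concrete, here is a minimal Python sketch of a streaming Bayesian update. It is not the Nimbus SDK or RxInfer API (RxInfer is a Julia library); the names `GaussianBelief`, `update`, and `run_stream`, the prior, and the noise variance are all assumptions chosen for illustration. The belief about a single unknown signal level is refined after every sample, and its variance doubles as an uncertainty estimate.

```python
from dataclasses import dataclass


@dataclass
class GaussianBelief:
    """Belief about an unknown signal level: a mean and a variance."""
    mean: float
    var: float


def update(belief: GaussianBelief, sample: float, noise_var: float) -> GaussianBelief:
    """Fold one noisy sample into the belief (conjugate Gaussian update)."""
    # The gain weighs the new sample against what is already believed.
    gain = belief.var / (belief.var + noise_var)
    new_mean = belief.mean + gain * (sample - belief.mean)
    new_var = (1.0 - gain) * belief.var
    return GaussianBelief(new_mean, new_var)


def run_stream(samples, prior: GaussianBelief, noise_var: float):
    """Yield the updated belief after every incoming sample."""
    belief = prior
    for sample in samples:
        belief = update(belief, sample, noise_var)
        yield belief


if __name__ == "__main__":
    # Hypothetical feature values; a real system would read them from a device.
    stream = [1.2, 0.8, 1.1, 0.9]
    prior = GaussianBelief(mean=0.0, var=10.0)
    for b in run_stream(stream, prior, noise_var=1.0):
        print(f"mean={b.mean:.3f}  std={b.var ** 0.5:.3f}")
```

Each update costs a handful of arithmetic operations and needs no stored history, which is why this style of inference suits streaming data: there is no separate retraining step, only the next update.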

docs.nimbusbci.com

<10ms Inference Latency

Real-time processing of EEG and neural signals with sub-10ms end-to-end latency.

Continuous Adaptation

Models update on every new sample — no retraining cycles, no downtime.

Interpretable by Design

Probabilistic outputs with uncertainty estimates — built for regulatory transparency.

Slide 5: The Competitive Landscape

Why BCI Processing Methods Fall Short

| Criterion | LDA | SVM | Deep Learning | Bayesian Inference on Nimbus SDK |
|---|---|---|---|---|
| Real-time Performance | High: very fast for simple, linear problems. | Medium: slower inference, especially with complex kernels. | Low: computationally heavy, often requires offline processing. | High (Reactive): designed for streaming data and continuous updates. |
| Adaptability | Low: a static model that must be fully retrained. | Medium: can be adapted, but it's often complex and inefficient. | Medium: requires large new datasets and extensive retraining. | High (By Design): continuously learns and updates from new data points. |
| Robustness (to noise) | Low: highly sensitive to outliers and noisy signals. | Medium: more robust than LDA but can still be affected. | Medium: can learn to ignore noise but requires vast data. | High (Explicit Modeling): models uncertainty directly, making it resilient to noise. |
| Interpretability | High: feature contributions are clear and understandable. | Medium-Low: becomes a "black box" with non-linear kernels. | Very Low ("Black Box"): decision-making process is completely opaque. | Very High ("White Box"): the entire model structure and reasoning is transparent. |

Slide 6: The Solution: RxInfer Inside

Real-time, Explainable Inference for BCI

We are building an SDK/API powered by RxInfer to serve as the core processing engine for the next generation of BCI devices.

Factor Graphs

For "white box" models that regulators and users can trust.

Variational Inference

For scalable computation balancing accuracy and speed.

Reactive Message Passing

For adaptive, real-time performance on streaming data.

The result: we reduce BCI latency from over 200ms to a target of 10–20ms.
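As an illustration of message passing on a factor graph over streaming data, the Python sketch below runs exact sum-product forward filtering on a chain-shaped graph (a two-state hidden Markov model). It is not RxInfer or Nimbus code, and it uses exact rather than variational inference. The state names, transition probabilities, and emission parameters are hypothetical values picked for the example.

```python
import math

STATES = ("rest", "imagery")

# Hypothetical model parameters, chosen only for illustration.
TRANSITION = {
    "rest": {"rest": 0.9, "imagery": 0.1},
    "imagery": {"rest": 0.1, "imagery": 0.9},
}
# Mean and standard deviation of a scalar feature under each state.
EMISSION = {"rest": (0.0, 1.0), "imagery": (2.0, 1.0)}


def gaussian_pdf(x, mean, std):
    """Density of a normal distribution at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def forward_step(belief, observation):
    """One forward pass: transition message, then observation message."""
    # Message through the transition factor: where could the state be now?
    predicted = {
        s: sum(belief[p] * TRANSITION[p][s] for p in STATES) for s in STATES
    }
    # Message from the observation factor: how well does each state explain it?
    unnormalized = {
        s: predicted[s] * gaussian_pdf(observation, *EMISSION[s]) for s in STATES
    }
    total = sum(unnormalized.values())
    return {s: v / total for s, v in unnormalized.items()}


def filter_stream(observations, prior=None):
    """Yield the posterior over states after each new observation."""
    belief = dict(prior) if prior else {s: 1.0 / len(STATES) for s in STATES}
    for obs in observations:
        belief = forward_step(belief, obs)
        yield belief


if __name__ == "__main__":
    for posterior in filter_stream([0.1, 1.9, 2.2]):
        print({s: round(p, 3) for s, p in posterior.items()})
```

Every quantity in the loop has a direct reading: the transition table encodes how states persist, the emission table encodes what each state looks like, and the output is a probability per state rather than a bare label. That readability is the "white box" property the slide refers to.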

Slide 7: Product Offering

The "Intel Inside" for Neurotechnology

- A Core Engine: A powerful inference engine deployable in the cloud or at the edge.

- Pre-built Models: A library of models for common BCI paradigms (e.g., motor imagery, SSVEP, P300).

- Developer Tools: A comprehensive SDK and API for seamless integration into any neurotech stack.

Slide 8: Visual Pipeline Builder

Nimbus Visual BCI Pipeline Generator

⚡ Key Features:

Slide 9: Nimbus Studio Competitors

Why Existing Tools Fall Short

| Alternative | Limitation | Nimbus Advantage |
|---|---|---|
| NeuroPype | $2,000–$5,000/year, desktop-only, no embeddable SDK | 10× cheaper, web-based, embeddable SDK for hardware partners |
| OpenBCI GUI | No ML pipeline, no model training, visualization only | Full ML pipeline from offline training to real-time deployment |
| MNE-Python | Code-only, requires deep Python expertise, no visual interface | Visual drag-and-drop interface accessible to non-coders |
| MATLAB / BCILAB | Expensive license ($2K–$5K/year), legacy codebase, no cloud | Modern cloud-native stack, no license fees, open integration |
| Custom dev team | $120K+/year per developer, 12–18 months to first working prototype | SDK co-development at a fraction of the cost, 6× faster to market |

No existing tool combines a visual interface, Bayesian probabilistic outputs, and an embeddable hardware SDK in a single platform.

Slide 10: Target Customers

Three Validated Customer Segments

Researchers, PhD Students & BCI Consumers

BCI Hardware Startups

Medical Device & Clinical Teams

Slide 11: Market Size & Growth

A $400 Billion Market Opportunity in the US Alone

$80.8B

Early TAM

~2.8M patients with critical impairments (ALS, stroke, SCI, epilepsy, depression)

First-wave adopters — horizon: 2035

$320B

Intermediate TAM

~6.8M follow-on adopters with moderate impairments as technology matures

Follow-on wave — horizon: 2045

~$400B

Total US TAM

Combined addressable market for BCI implants in the United States

Source: Morgan Stanley BCI Primer, 2024

Nimbus Addressable Segment: The Software Layer Inside Every BCI Device

$50–100M

SAM Today (2025)

Developer tools & pipeline software for BCI labs and researchers

$500M–$1B

SAM at 5% BCI Penetration

SDK royalties + Studio SaaS as BCI devices reach early clinical scale

$1B–$2B+

SAM at Market Maturity

Per-unit royalties across millions of deployed BCI devices globally

Slide 12: Business Model

Two Independent Revenue Streams

Nimbus Studio

Freemium SaaS — researchers & BCI consumers

$49 – $199

/month per seat

- ✓ Free tier: unlimited pipeline building, community support

- ✓ Pro ($49/mo): real-time execution, export, priority support

- ✓ Team ($199/mo): shared workspaces, advanced models, SLA

✓ 80+ on waiting list — zero paid marketing

Nimbus SDK

B2B co-development — hardware startups

$20K – $150K

per contract + equity/royalties

- ✓ 9-month co-development: full team + advisors access

- ✓ RxInfer Pro license: embedded in partner hardware

- ✓ Per-unit royalty: recurring revenue on every device shipped

✓ 2 signed LOIs: Miruns & BrainBit+PiEEG

Slide 13: Partnership Model

Why Partner with Nimbus?

EEG Hardware

Your specialized sensors and acquisition systems

Nimbus Software Layer

Real-time inference engine with explainable AI

Applications

Medical devices, gaming, AR/VR, assistive tech, meditation, stress monitoring

Complete Computational Expertise

No need to hire expensive AI/ML specialists. We provide the entire software stack.

Faster Time-to-Market

Launch products 6-12 months faster. Focus on hardware while we handle software.

Cheaper than In-House

Save 60-80% compared to building an internal team.

All Benefits of RxInfer

10x latency reduction, explainable AI, real-time adaptability.

Slide 14: Value Proposition

Why We Win

- 10x Performance Boost: A massive reduction in latency creates a fundamentally better user experience.

- Regulatory Advantage: Our "white box" approach provides the explainability required for FDA/CE approval.

- Operational Efficiency: High adaptability eliminates the need for frequent, expensive retraining campaigns.

Slide 15: Traction

Early Validation — Before Funding

80+

Waiting List

Researchers & labs signed up with zero paid marketing

2

Signed LOIs

Miruns & BrainBit+PiEEG — hardware SDK co-development

✓

Live Product

Nimbus Studio publicly accessible with working pipeline builder

RxInfer.jl — 500+ GitHub stars, used in 10+ academic publications

The inference engine powering Nimbus already has proven scientific traction

Advisory board with Jeff Beck (Duke), Ryan Smith (LIBR), Bert de Vries (TU/e), Alexander Kuck

World-class scientific and industry validation secured

Nimbus SDK & Nimbus Studio — production-ready

Both core products are built and available for partner integration today

Slide 16: Revenue Projections

Path to $1.1M+ ARR by Year 3

Year 1 (2025)

$20K

Studio: Free beta — 0 revenue

SDK: 1 co-dev contract ($20K)

Year 2 (2026)

$120K

Studio: 20 Pro seats — $20K/yr

SDK: 2 contracts — $100K

Year 3 (2027)

$1.1M+

Studio: 300 seats — $770K/yr

SDK: 3+ contracts — $300K+

Upside Scenario: $4.5M ARR by Year 3

If one SDK partner ships 10,000+ devices under a per-unit royalty, or if the enterprise clinical tier launches on schedule in Q4 2026, cumulative Year 3 revenue reaches $4.5M. This is structurally similar to the Arm licensing model, applied to BCI hardware.

Slide 17: Long-Term Vision

The Figma of BCI

Years 1–3 — Foundation

Establish Nimbus Studio as the standard tool for BCI research. Sign 5+ SDK hardware partners. Reach $1M+ ARR.

Years 3–5 — Scale

Launch enterprise clinical tier. Expand SDK royalty base to 50K+ devices. Build a community marketplace of BCI pipeline templates.

Years 7–10 — Platform

Become the default inference layer for BCI hardware globally — the “Intel Inside” of neurotechnology. Target: $50M+ valuation.

$50M+

Target valuation by Year 7–10

● Every BCI device ships with Nimbus SDK

● Researchers worldwide use Nimbus Studio

● Nimbus = the standard for explainable neural inference

Slide 18: The Team

Our Founding Team & Expertise

Slide 19: Advisory Board

Guidance from World-Class Scientists

For context: Yann LeCun (ex-Meta Chief AI Scientist) averages ~50K citations/year on Google Scholar.

Jeff Beck

Professor of Computational Neuroscience

Duke University

7,169 total citations

~360/year • h-index 28

Computational neuroscience & Bayesian brain models. Co-author of landmark paper cited 1,955×.

Ryan Smith

Research Associate Professor

Laureate Institute for Brain Research

8,229 total citations

~1,400/year • h-index 48

Active Inference & BCI translation. Collaborates with Karl Friston (UCL).

Bert de Vries

Professor of Signal Processing & ML

Eindhoven University of Technology

3,603 total citations

~95/year • h-index 26

Co-founder of GN Hearing (Jabra). Creator of RxInfer.jl — the core engine of Nimbus.

Alexander Kuck

Principal Engineer

Medtronic

Industry practitioner

Neurotechnology & medical devices

Expert in BCI hardware integration, medical device software, and neurotechnology commercialization.

Thank You

Let's build the future of neurotechnology together.